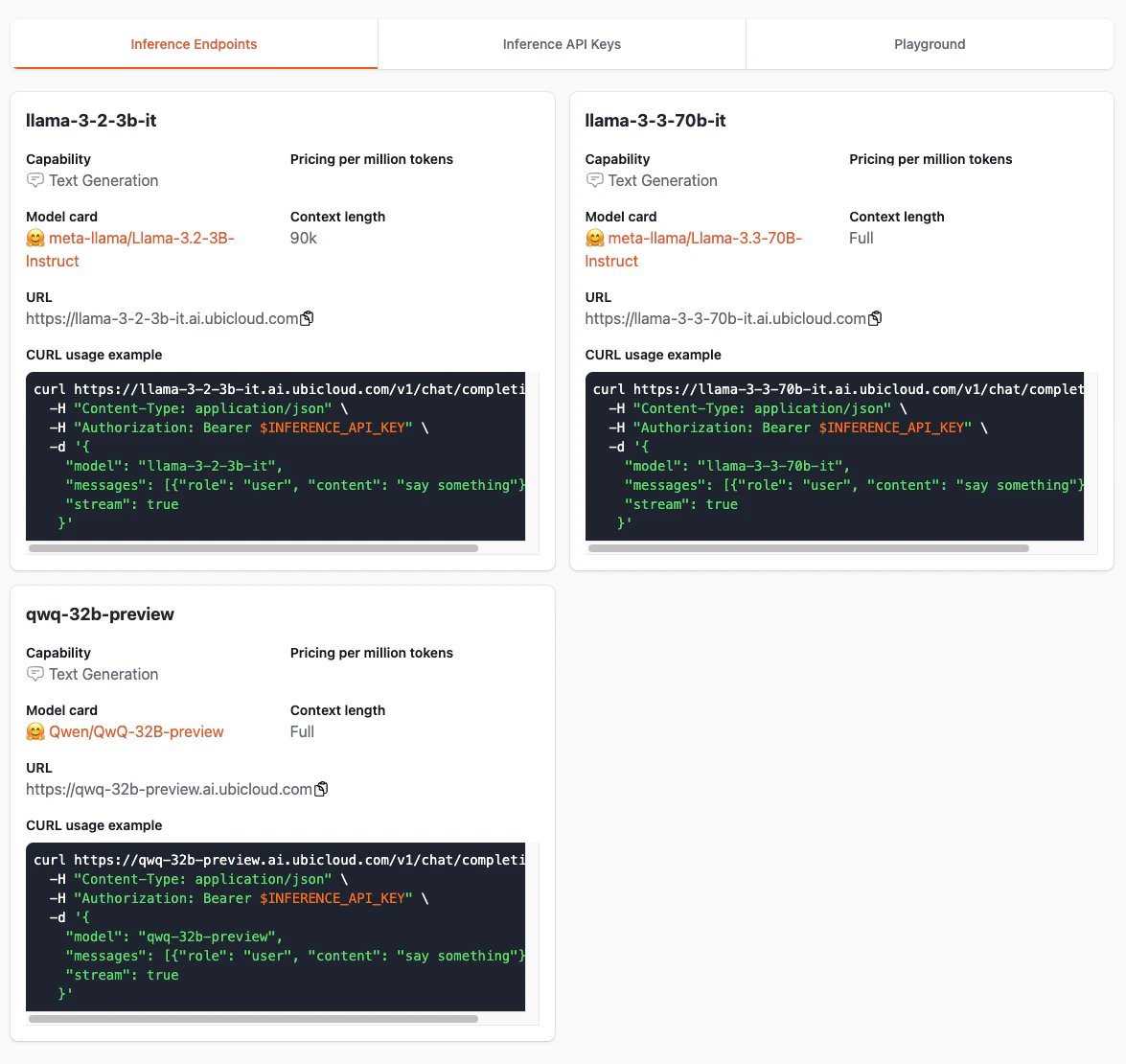

Inference Endpoints offer OpenAI-compatible APIs, which makes integration with existing systems straightforward and reduces the risk of vendor lock-in. Privacy is a top priority: Inference Endpoints neither store nor reuse user data. They are built on open-source software, which keeps their operation and functionality transparent. The Inference Endpoints tab shows usage, pricing, and the capabilities of each available endpoint.
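Because the API is OpenAI-compatible, a client written for the OpenAI chat completions format should need little more than a different base URL and API key. The following is a minimal sketch under that assumption, using only the Python standard library. The base URL, model name, and API key are hypothetical placeholders, not real values; take the actual ones from the Inference Endpoints tab. The helper names `build_chat_request` and `send` are likewise illustrative.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with the values shown for your endpoint.
BASE_URL = "https://example-endpoint.invalid/v1"  # assumption, not a real endpoint
MODEL = "example-model"                           # assumption, not a real model name
API_KEY = "YOUR_API_KEY"                          # assumption, supply your own key


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL, api_key=API_KEY):
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


if __name__ == "__main__":
    request = build_chat_request("Say hello in one sentence.")
    # Inspect the request locally; call send(request) once real values are set.
    print(request.get_method(), request.full_url)
```

Keeping the base URL, model, and key as parameters means that moving between OpenAI-compatible providers only requires changing those three values, which is the practical sense in which the compatible API reduces lock-in.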